Is AI taking over social science research? Part 2

Navigating the jagged frontier from a qualitative perspective

Will AI help with this?

In contrast to early hopes that AI may become the great leveller in terms of access, the interaction of technology and professional stratification is rarely that straightforward. A recent HEPI report showed that students are sharply divided on whether AI contributes to their learning or hinders it. I find this polarisation not at all surprising, and we are already seeing it with academics.

Working out how to use AI well, with which tool, and for which tasks, is still at the exploration stage. And right now, the debate is dominated by quant researchers.

With the toxicity of this debate at an all-time high, I agree with Kustov that AI disclosure is simply not viable right now. I heard from a colleague (mostly quants, some mixed methods) that they had something rejected for disclosing their use of AI in a responsible manner. And I do not doubt it. It’s the vibe, and even when a journal has an AI policy, you risk having your work dismissed even if you used AI responsibly and checked everything (which usually takes so long it often negates the purported productivity advantage).

In the BAM AI White Paper, which I co-authored, we called for responsible AI disclosure. But in our recent podcast, I was a lot less confident about that.

So, how well you can use AI will increasingly matter, and whether people can tell that you used it will make all the difference.

Knowing how to navigate the jagged frontier

One of the best examples of this is Adam Kucharski’s recent blog “How much time did past Adam waste?” — turns out, not that much. And this is for an Excel-based task, where you would think AI tools have an edge:

“What is hard is working with fragmented, incomplete, inconsistent datasets and coming up with clever methodology to tackle genuinely new research questions. And I’m yet to see evidence that common agents are about to takeover in this space. Even if I really do wish they could have saved me all that time a decade ago.”

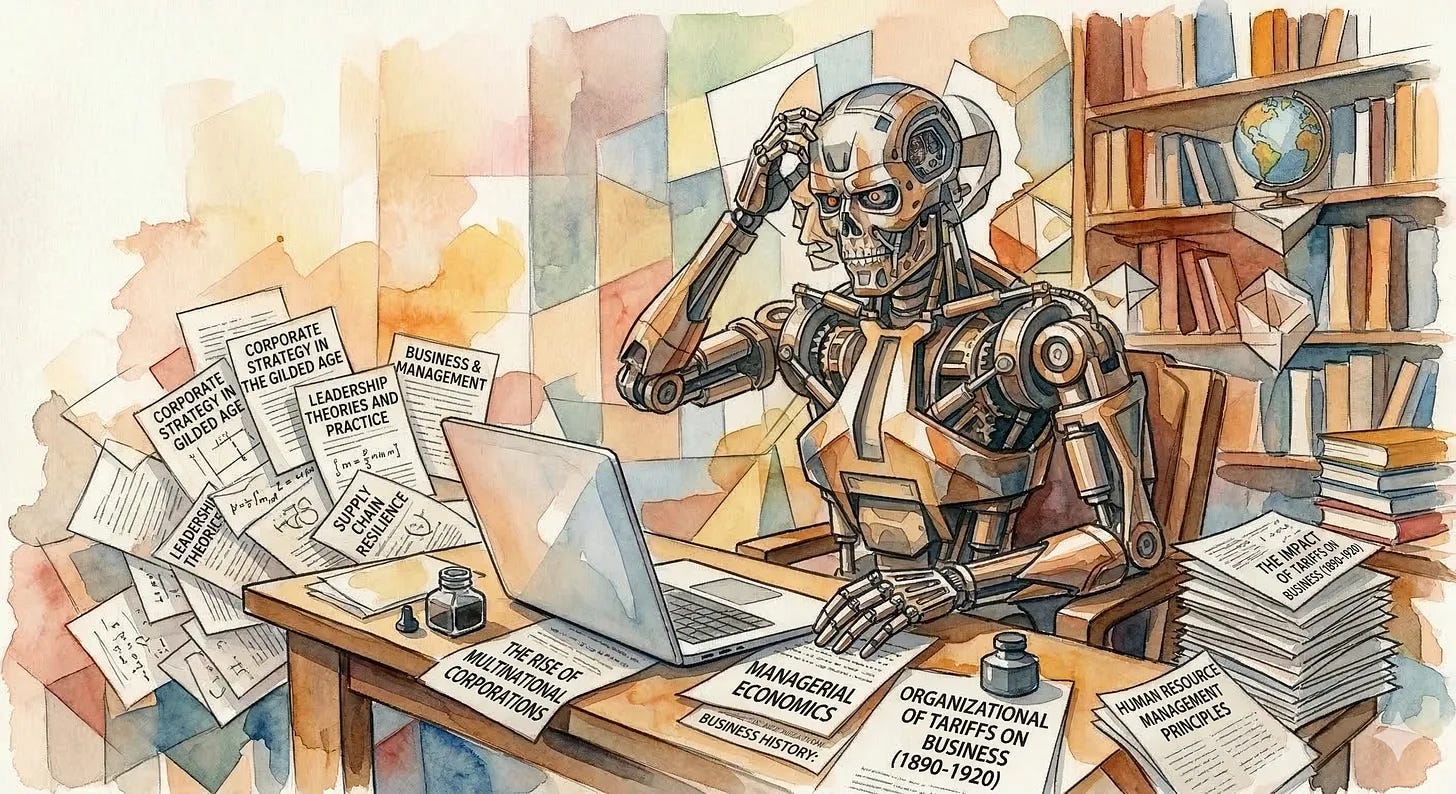

I have similarly tried various AI tools over the years to see if I could really short-circuit one of the tasks that qual researchers most frequently outsource to the rare research assistant who may come along on a grant or through a co-author:

The structured literature analysis

I have a particular set of articles tightly clustered around an issue, fewer than 50 in number, from which I want to extract very specific information. Originally, I tried Elicit, around 2023. The results were disappointing, and the tool seemed to be set up for medical researchers or similar. Now, with Claude Cowork at the ready, I decided to tackle this again, even though I have already done all the work manually. So it seemed an optimal test along the lines of “how much time could past Stephie have saved?”

Again, not much, as the result returned some egregious errors, which led to a longer conversation with Claude about how it works. In summary, text extraction at volume remains a challenge for context windows, and when the information is not on the first few pages of long academic articles, Claude defaults to “inference” based on training data and known outputs by academic authors.

What I did learn is that my instructions and the memory file for Claude need to include explicit instructions to mark where inference was used and flag for human checking.
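To make that lesson concrete, here is a minimal sketch of what "mark where inference was used and flag for human checking" could look like if the extraction results were kept in a structured form. Everything in it is an assumption for illustration: the `ExtractedField` schema, the field names, and the rule in `needs_human_check` are hypothetical, not something Claude or any other tool produces by default.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ExtractedField:
    """One piece of information pulled from one article (hypothetical schema)."""
    article: str                 # short label for the article
    field_name: str              # e.g. "method" or "theoretical lens"
    value: str                   # what the tool reported
    source_quote: Optional[str]  # verbatim passage backing the value, if any
    page: Optional[int]          # page where the passage was found, if any
    inferred: bool               # True if the tool says it inferred the value


def needs_human_check(item: ExtractedField) -> bool:
    """Flag anything inferred, or anything lacking a quote and page to verify."""
    return item.inferred or not item.source_quote or item.page is None


def review_queue(items: List[ExtractedField]) -> List[ExtractedField]:
    """Return only the entries a human should check against the PDF."""
    return [item for item in items if needs_human_check(item)]
```

The design choice is deliberately conservative: an entry only escapes the review queue if it comes with both a verbatim quote and a page number, so anything the tool filled in from "known outputs" of an author lands on the human's desk.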

If I can get a human research assistant for this task, that would still be superior to Claude. (Sorry, Claude.)

The rare qual voice

Over at Unpublishable Papers, an anthropologist’s view on AI brings up some pretty key issues for any business and management scholar:

“But artificial intelligence has still not changed much about the time and effort that goes into theoretical labor. Theory is still expensive. …But theoretical labor was always the important part.”

Which perhaps sums up why most business and management scholars remain quiet (and smug), even though most research is, in fact, quantitative. But our field is apparently not as empirically driven as the many concerned political scientists and economists (who generally think of themselves as perhaps somewhat superior to the upstart business schools).

The other social sciences, perhaps, sometimes wonder:

“But if theoretical labor is the important part, why don’t we instead have a scientific world maniacally focused on the stakes above all else?”

Well, newsflash, everyone, this is what we are maniacally focused on ABOVE ALL ELSE ALL THE TIME.

Not least at the top journals. Sure, there are plenty of low-hanging journals that take the other stuff. They were not particularly influential before. Some significantly expanded their volume to make money from the shift to open access, well before the first AI tool was ever launched. In this ecosystem, the potential for AI-produced work to significantly shift the field remains limited.

Will AI's affordances topple the dominance of theory in business and management? Well, probably not, and for the first time in ages, I think this may be a good thing. If we are lucky, it may even broaden and change the definition of theory, though I am less sure about that.

The only real challenge now is reading smoothly written, well-presented academic prose, which occasionally even features a short sentence for impact or distraction, but in which there is no “there” there.

Of course, being able to write in a specific fashion has long been a shorthand in the field for “quality” (otherwise known as having been trained at the right schools, which are US American or trying to be). But even that, LLMs do not always fully manage. Or, indeed, their users have failed to set up their system correctly because they themselves are unaware of these conventions. Or just very bad at using AI…

Academic Publishing

Now this is where the crunch of the debate is… Whenever I see someone in business and management vociferously complain on LinkedIn (and probably elsewhere, on platforms I am not on) that they were just sent an AI-generated manuscript for peer review with hallucinated references, I feel:

Sorry for the editors, who don’t have the time to check at this level and who are given no support in dealing with an avalanche of AI-generated dross (and who are generally unpaid beyond an honorarium in our field).

Angry at the publishers, who make a lot of money and push their homespun new publishing systems at us, with their endless problems, just because they now want to make money from data as well, PLUS from licensing OUR writing to AI companies. And who then completely fail to purchase other AI systems that could simply scan incoming manuscripts for hallucinated references (yes, these firms exist; see, for example, GroundedAI). OK, breathe…

Exasperated with colleagues reviewing these pieces and vocalising their discontent with the moral superiority common to academics. Not because they are annoyed with hallucinated references. But because they then feel they can spot every instance of AI-generated work. You cannot, OK? You can identify the incompetent users at best.

That’s the ground-level experience right now.

Economists, of course, like to take the high ground. They also, it turns out, put their successful posts behind a paywall (OK, probably the archive function), so if you want to read it for yourself, you will need the 7-day trial. I really liked Scott Cunningham’s post, though some aspects really made my eyes roll madly in their sockets.

The first one was this:

Clearly, there is no scarcity in potential output space. Economists may think so, but that reflects distinct disciplinary institutions that reinforce a potentially even more hierarchical journal structure than the one in business and management. Since nobody properly pays editors (though this varies by field), you can hire them just fine. Editors are dirt cheap. The constraints are how many more papers we actually want to publish and whether we can effectively expand quality control.

At a relevant meeting last week, there were some general statements that publishing 1,500 or indeed 2,000 papers per year is clearly not aligned with quality control. It’s a generous assessment; even with a large team, I’d think the boundary for any one journal would be lower. But Cunningham’s argument is about a range of journals, and, as a larger field, business and management has many more of them.

So the idea that there is a limited, not at all dynamic pool of slots being targeted by authors, AI-assisted or otherwise, seems fanciful.

I agreed more heartily with the challenge of human peer review.

So, is human peer review dead? Probably. Is that such a disaster? I totally agree with Scott Cunningham, and so do others like Dave Karpf (now over at beehiiv). Karpf goes further, arguing it is the eulogy of the social science paper. By and large, his argument amounts to Goodhart’s law: we need to consider whether we are measuring the right thing for tenure, promotion, appointment, etc.

“Peer review was already stressed to the breaking point. It DOES NOT SURVIVE when a young researcher can have Claude Code produce a lit review, gather data, conduct a regression analysis, and slap on a passable discussion and conclusion section. Of course we will be flooded by AI-written/researcher-lightly-reviewed articles. Of course peer reviewers will either opt out of the (voluntary, thankless) labor of offering genuine feedback, or will have Claudebot heavily “assist” them in reviewing.

And this is a serious problem for Hiring Committees and Promotion & Tenure Committees. Universities are slow, lumbering bureaucracies. This is an appropriate time for them to freak out and start adjusting to the “death of the journal article.” They’re measuring the wrong thing. They will have to start measuring something else.

That brings me to my second point, though: good riddance!” (Dave Karpf)

What he does not provide is a blueprint for an alternative system. It just turns into another “woe is us in political science, the job market is so bad”. I am sympathetic, but you know? So what?

The economists seem hell-bent on raising submission fees even more as a deterrent, with little consideration for the well-known problems with that approach. Also, what about the rest of us, where submission fees are not accepted? And frankly, if a fee-charging journal asked me to review for them FOR FREE(!), I would tell them where to stick it.

So, we are all heading headlong into the academic interregnum:

“The old world is dying, and the new world struggles to be born: now is the time of monsters”. (Antonio Gramsci, Prison Notebooks, c. 1930)

As far as monsters go, I quite like Claude Opus. And you know what I do now when I read something I am fairly sure is mostly AI-generated, with little to no human academic input?

Sorry, this last bit is paid subscribers only ;-) A bit of a personal comment and a “rattle bag” of some great readings out there!