Marking in the age of AI - Part 1

On moral panics and Bloom’s taxonomy

I am marking at the moment. And it is quite an interesting experience (for a change, you might say). Marking in the age of GenAI is very different from before. This is the first time I have assessed student work since spring 2023, because I had a senior leadership role in between. ChatGPT had not been out that long when I was last marking. It was creeping in, and as an educator you were at least getting suspicious.

Two years on, it is different. Turnitin is no longer picking up plagiarism in students’ work. The English is clear and comprehensible, notably even in the case of students who can barely make themselves understood verbally. So yes, this is another post about AI (sorry, other stuff coming soon).

But it is difficult to escape right now, and it does feel like we are living through a major Zeitenwende, when the tides of time are shifting and the after no longer looks anything like the before. It is a strange feeling to know that we are living through one of those moments we will all look back on as a profound marker in time. But to be fair, we should be getting used to it. We’ve had a few since the millennium.

Moral panic ahead

But then, is it as big a moment as so many blogs, articles, and punters make out? In the last few weeks, the debate appears to have been dominated by the article in New York Magazine’s Intelligencer, which, full disclosure, I have not read as I do not have a subscription. However, I feel as if I have, given the amount of commentary about it I have seen in my feeds…

It’s somewhat baffling how the US media only ever pick up on things YEARS after the rest of us in other English-speaking countries. They then launch into a conversation that quickly dominates the (metaphorical) airwaves and involves much hand-wringing about problems and solutions that just feel a little… 2023?

Let’s recap: students cheat by submitting work created by generative AI tools, and professors know but cannot do anything about it because there are no tools that reliably identify AI use.

AND it is the end of Higher Education AS WE KNOW IT!

There seems to be a whole movement to ban the use of AI outright and to go back to in-person handwritten exams, all of which:

- is logistically impossible,
- offers questionable preparation for an increasingly difficult job market, and
- raises some serious pedagogical questions about what we actually test in exams.

I don’t know what the reality for our US colleagues is, though I suspect it is much the same as ours (although, given the A1 Steak Sauce debacle, maybe not quite). We had extensive debate and training in 2023 and again in 2024, and the UK’s Russell Group universities agreed a set of AI principles that encourage responsible use and disclosure.

Of course, some colleagues are viscerally opposed, while others lead on best practice adoption. In contrast to many others, our university has an explicit policy and a submission form that asks students to disclose whether they used AI in the approved manner.

Surprise, surprise: many don’t fill this in, or they tick “no AI use” even when the smoothness of the prose puts a massive question mark over that claim. The stigma of using AI is still very high, and students are perhaps realistic in expecting that they may be marked down if they admit to using it.

How do students use AI, and what is that telling us?

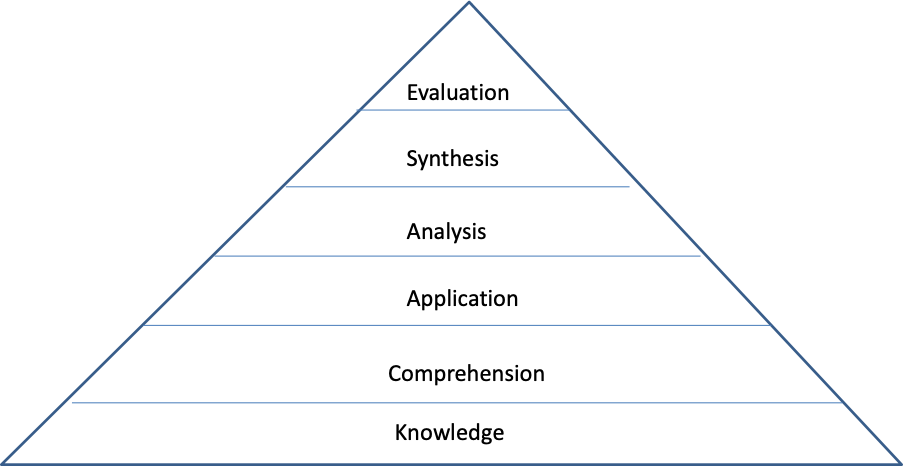

While alarmism always gets more clicks, there have been some far more interesting contributions discussing HOW students are actually using AI. Both Anthropic and OpenAI released education reports showing that students use these tools for analysing, thinking and self-teaching. Anthropic refers explicitly to Bloom’s taxonomy, highlighting that students often (though not always) seek assistance with the higher-order elements of learning.

Two recent posts here on Substack explore the implications of these findings to challenge the current alarmism: Annette Vee at AI & How we are teaching provides a good overview of recent studies on students’ use of AI beyond the headline-grabbing anxiety. Beatrix Law’s A Letter to the Anxious Reader is equally critical of the media’s focus on cheating, and offers students a teachable model of prompting for writing and revision.

Her piece ends with an AI disclosure that reflects on the energy used to produce it. AI may be faster and almost as good as a human writer, but I certainly don’t need over 14 litres of water to produce just over 2,000 publishable words (though do see my own disclosure of my resource needs).

Hello Mr. Bloom! Bloom's taxonomy outlines how different forms of intellectual skills build on each other and provides a guide for instructors in developing their teaching and assessment.

Bloom, B. S. (1956). Taxonomy of educational objectives, Handbook I: Cognitive domain. New York: David McKay.

What both of these excellent posts have in common is that they approach “AI as Normal Technology” — which is also the title of a great new article by Arvind Narayanan and Sayash Kapoor, in which they extend their ideas from AI Snake Oil (the book, though it is also the name of their newsletter).

As with any technology in history, adoption is both more transformative and more challenging, and therefore inherently slower, than the initial innovation itself. And there are wider institutional frameworks, which they refer to as speed limits: “AI diffusion lags decades behind innovation. A major reason is safety—when models are more complex and less intelligible, it is hard to anticipate all possible deployment conditions in the testing and validation process.”

Machines of Loving Grace

That seems a far more realistic take on what they call the innovation-diffusion lag than the vision Dario Amodei, who co-founded Anthropic with his sister, sets out in his essay Machines of Loving Grace about what the future with AI will look like. Especially in the final sections, which stray into social science (and where he admits he is less confident in his conclusions), he presents a worldview in which the benefits of AI diffuse without any great obstacles and are distributed relatively equally.

The cognitive theory-in-use here appears to be a model of gas diffusing into a vacuum, and it is not really being examined closely by those using it (not just Amodei). If it were, it would be clear that society is not a vacuum: even if innovation had the diffusion properties of a gas (and it is debatable whether any aspect of this metaphor holds), it would not spread quickly and evenly.

Narayanan and Kapoor’s innovation-diffusion lag sounds like a more realistic scenario, but to any organizational scholar it is still a flawed model. Patentable technology may diffuse through industries globally, but the transformation of organizational practices and business models that technology induces is a different matter, because, as many studies have shown, these ideas are transferred through complex translation from one context to another.

And while they use the term diffusion, their argument is actually one of translation. This is especially clear when they turn to historical examples such as the impact of electrification on production, which did not bring the expected efficiency gains for decades: “What eventually allowed gains to be realized was redesigning the entire layout of factories around the logic of production lines.” Technology adoption is translation, not diffusion, and translation requires learning, experimentation, consultation and creation, all of which take time.

It’s not in the interest of the AI companies to push this far more reasonable discourse on new technology, because they face a dilemma that is not at all new: striking an appropriate balance between exploration and exploitation. The first costs money (billions in AI model development); the second makes money.

If the Financial Times is to be believed, adoption in companies is not happening fast. Helping students cheat on their coursework is not a viable long-term business model. We are moving inexorably towards the exploitation phase, when the initial investments in exploration need to be recouped, and as a business researcher and business historian, I am very curious where this will lead, because once the tide goes out, etc., etc.

Next week, the second part of this post concludes with the questions AI raises for academia and the difference it makes to our assessment approaches.

Full disclosure: Text produced by one fully artisanal human (with some grammar checking by Grammarly); energy requirements: 2 cups of tea, 4 coffees, a light lunch and one slice of cake, one round of playing ball with the dog. And several months of reading and listening to podcasts about AI, plus several days of marking postgraduate assignments.