No, it’s not April Fool’s! I’m releasing one of my popular posts from earlier in the year for all readers — hopefully with some good ideas for you to explore what you can do with the new AI tools!

Did we know we needed a handheld mini-computer that fitted into a pocket and also served as a phone before Apple released the first iPhone in 2007? Not so much. Did you know you wanted to code your own websites and apps? Well, I watched “Whizz Kids” in the 80s (and just so you know, it took many years for a programme to come from the US to non-English-speaking European countries, so I am not THAT old), so… yes, I always did. Not enough to actually learn coding, but with hindsight, that looks like a good choice.

So, after listening to one of my favourite tech podcasts over the snowy weekend, I felt inspired to give vibecoding another go. (They are calling it the Claude Code moment, and I’ve been a bit of a Claude fan from the beginning.) Also, the FT’s AI newsletter, interestingly enough, focused on how AI will change the way social scientists work. I think the sub-text here was quantitative social scientists (it’s the FT, after all, even though they do the occasional bit of qualitative work).

Nevertheless, their point was that it was making working with data easier and quicker, including laborious but thankless tasks such as data cleaning. While there is a fierce debate about the possibilities of AI for qualitative research, and specifically “qualitative coding” (which means identifying themes, not writing computer language here), this type of work has more straightforward quality standards and is very obviously the kind of task a researcher would want to outsource.

The Projects

Who has won the Henrietta Larson Prize in BHR?

I thought this would be a straightforward task to check AI capabilities. Well, turns out, it isn’t. Only an incomplete list exists, and AI tools differed greatly in how well they rustled up alternative sources from the depths of the internet.

And guess who is the only academic to win the Henrietta Larson Award twice?

Yes, it’s me!

Worth noting that there are currently no records of who won the preceding Newcomen Article Award, as the Henrietta Larson was called prior to 2007. I emailed BHR about that. They don’t know either, but may find out. But even then, I am currently the only one to have won the Henrietta Larson twice. As a paying subscriber, you get the full list (cross-checked and confirmed by BHR).

The tasks that I tested AIs for were data searching and data validation. The tools were Claude and Gemini, and their performance was noticeably different.

The personal website, aka the unnecessary vanity project

(Which I really need to update to highlight that I am the only author to win the Henrietta Larson Prize twice. Did I mention this yet?)

My two cents: nobody needs a personal website today, with all the profiles and whatnots. Mine is a set of links to my many other profiles, and it pulls in my publications through my university’s publication repository (so no additional updating). But it was really super-easy, it looks rather good, and it is completely free to host on GitHub, so what’s not to like?

The task was creating a website (duh) and I only used Claude — it was more a proof-of-concept, really. Also, not everything worked, and I am still not sure why.

A global map of business historians: the pièce de résistance

Now, this is a long-running test vehicle of mine, which I roll out periodically to see how useful these AI buddies might be as research assistants. The answer to this has long been disappointing — until now!

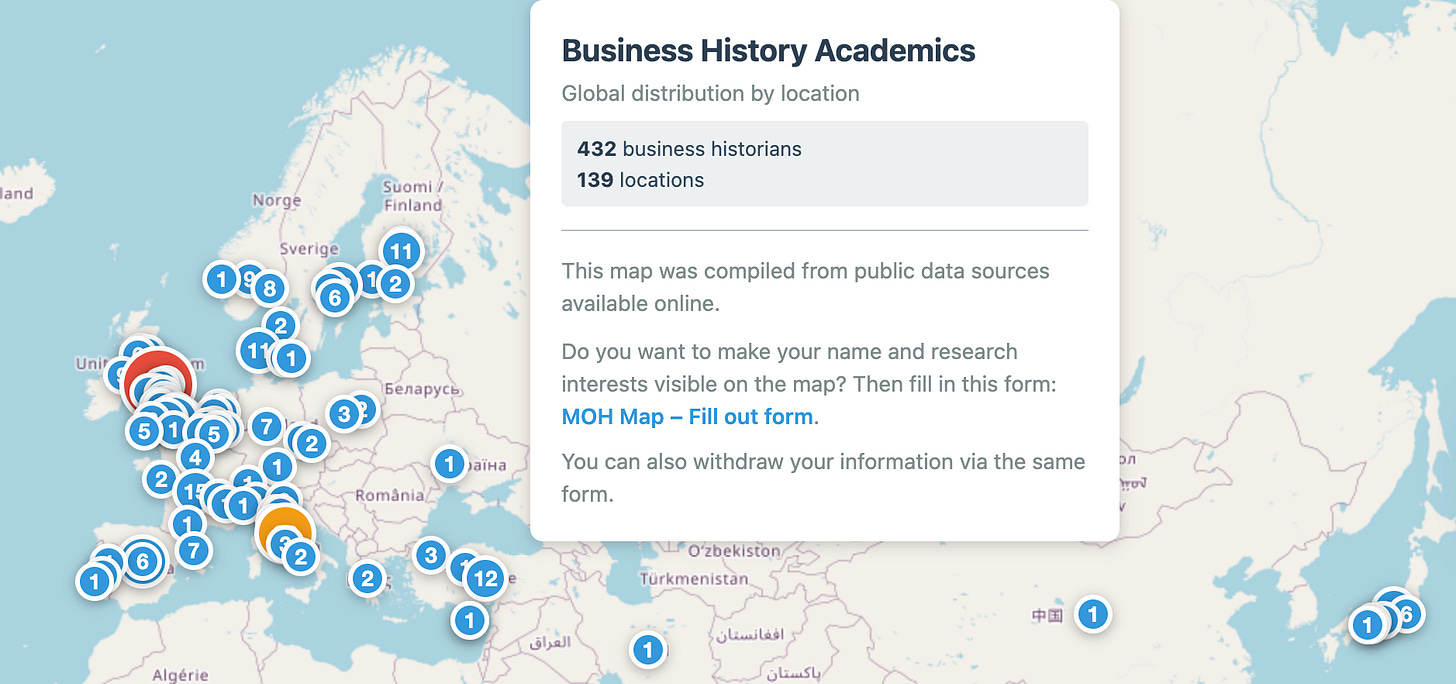

Now we have a map. It is not complete. Don’t blame me; relying on publicly available online data sources is trickier than one might expect.

Also, the title is slightly wrong — this map also includes people identifying as management history, historical organization studies and organizational memory. More about how to do this, and what this teaches us about the abilities and limitations of current AI models, after the paywall.

The tasks here were many: data searching, data cleaning, creating an intermediate output, creating a website, and teaching a clueless academic how to work GitHub. I predominantly used Claude with some Gemini and Copilot/ChatGPT thrown in. More on why below.

What is vibecoding?

It’s a fancy word for telling an AI (an LLM to be precise) to write a digital object or programme for you. Sometimes it also writes back with what you need to do or what your options are. That part can be tricky, in my experience, as GitHub Codespaces, and GitHub in general, continue to confuse me immensely.

I like to think I am getting better at GitHub due to recent adventures — but better is compared to utterly clueless:

How do you delete something here? (AI taught me how.)

What is a branch, and how is it different from a fork? (Still no idea. I could ask AI, but I have not cared sufficiently so far.)

Now, the reasonable question you may ask is: but why?

There are a number of answers:

1. When you ask LLMs for anything, not even that complex, they often actually write code to do the thing you asked for. So you are already vibecoding by just putting in queries.

2. You want a non-human research assistant you can order around (ooh do I?!?) and have this RA do tasks for you that you would find too boring to do yourself, or which do not have high enough priority for you to really engage with.

3. You always imagined a bespoke piece of software that would be brilliant for your research, but which was never feasible to create. Or the AI suggested a brilliant idea you never thought of by extending into your ask. This Substack post is making this point in a much broader way.

For the most part, my engagement with AIs is focused on 2., but I have dabbled with 3. on occasion, though until now found it all not quite as advertised. So I am mostly interested in data searching, data cleaning and data validating.

1. Henrietta Larson Prize Winners

I thought this would be easy. Just a question of data collection online. How wrong I was. Claude identified a multi-year gap, and, by checking various BHR websites and article tags, I confirmed that it was entirely correct. I then went to Gemini, which did an excellent job of finding the missing dates by adding the winners from various announcements by them or their universities, or indeed notes appended to articles in institutional repositories that won the prize.

I then went to Claude to validate Gemini’s list, and Claude picked up on a hallucinated title (my 2018 article was replaced by the title of my 2022 book). Claude and Gemini both struggled with some announcements that confused the announcement year with the year for which the prize was awarded. Gemini also correctly highlighted that the Henrietta Larson Prize was first awarded in 2007, when the existing Harvard Newcomen Prize was renamed. Neither tool could find any record online for previous prize winners.
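For anyone who wants to repeat this kind of cross-check without reading two lists side by side, here is a minimal sketch in Python. It is not what Claude or Gemini ran; the `Winner` record, the `cross_check` function and the placeholder entries are all hypothetical, and it assumes exactly one winner per award year.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Winner:
    year: int    # year the prize was awarded for (not the announcement year)
    author: str
    title: str


def normalise(text: str) -> str:
    """Lower-case and collapse whitespace so trivial differences do not count."""
    return " ".join(text.lower().split())


def cross_check(list_a: list[Winner], list_b: list[Winner]) -> dict[str, list]:
    """Compare two winner lists year by year and report what needs a human look.

    Assumption (hypothetical): each list holds at most one winner per year.
    """
    a_by_year = {w.year: w for w in list_a}
    b_by_year = {w.year: w for w in list_b}
    report = {"missing_in_a": [], "missing_in_b": [], "conflicts": []}

    for year in sorted(set(a_by_year) | set(b_by_year)):
        a, b = a_by_year.get(year), b_by_year.get(year)
        if a is None:
            report["missing_in_a"].append(year)
        elif b is None:
            report["missing_in_b"].append(year)
        elif (normalise(a.author), normalise(a.title)) != (
            normalise(b.author),
            normalise(b.title),
        ):
            # Same year, different author or title: e.g. a swapped-in book title
            report["conflicts"].append((year, a, b))
    return report


# Placeholder data only; these are not real prize winners.
tool_one = [Winner(2018, "Author A", "Article One"),
            Winner(2019, "Author B", "Article Two")]
tool_two = [Winner(2018, "Author A", "Some Book Title"),
            Winner(2019, "Author B", "Article Two"),
            Winner(2020, "Author C", "Article Three")]

print(cross_check(tool_one, tool_two))
```

A script like this only flags disagreements. Deciding which source is right (and whether a year is the announcement year or the award year) still needs a human, or an email to the journal.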

Finally, I emailed BHR to confirm that the list was correct; that there had been a previous prize; and that research on the physical back copies (or their digitised versions) was required to establish who the winners of the “Newcomen Article Award” were. Apparently, this has been raised before, so watch this space.

In the meantime, here is the only verified and complete list of Henrietta Larson Award winners:

What I learned

Gemini is great for anything requiring burrowing into the web. That is not surprising, given that it is a Google product. But in this task, and most others, I find that it regularly hallucinates. Usually once per chat. Usually, by sycophantically attributing papers to me that I never wrote (so very easy to spot most of the time).

Claude is not so great at web searches, but it is way better at checking, careful about what can be known, and shows the underlying data. I cannot even recall when Claude last hallucinated. When I accused it of a hallucination, it apologised, re-checked and then confirmed it had been right all along, and I had to eat my words… For data validation and research tasks that require high reliability, I would always use Claude (so I mostly use Claude).

2. Personal Website

OK, that was just a bit of fun after I listened to the Hard Fork podcast, where both hosts vibecoded their own websites (which I checked out) and were raving about Claude. I don’t need a personal website, but I wanted to try it out.

Claude does vibecoding amazingly. I see why they call it the Claude Code moment, even though your average Joe like me does not use the Code interface: I found the Claude Code terminal too intimidating and just used the normal chat interface. Hard Fork has a little YouTube tutorial on building websites with the actual Claude Code if you want to use that.

With a few iterations, I had a website. True, the Substack feed panel did not work. But with no problems at all, Claude checked the various places where live updates of my publications live, determined how to pull my publications from my University’s institutional repository, and created a bibliography that is fed from another database and will require no updating. Neat!

What I learned

You can code most things with Claude, even if you are completely clueless. GitHub gives you free pages. The era of personalised niche software has arrived, and you can do what you want as long as your subscription credits last and you have a little patience. Amazing.

Next time you need a project website, you don’t need to bother IT, get a software company involved, or pay for WordPress — just get a subscription to an AI tool.

3. The OHN Global Map

Now that was so much fun. Really, this builds on tasks I have been asking AIs to do for the past 8 months to get a sense of how fast their capabilities were improving. There was a lot of hype, and many tools were not nearly as good as the hype.

The most interesting thing for me was that AI tools are not as good at simple internet searches as one might assume. Every couple of months, I have asked various tools to create a directory of business historians in the UK. It sounds straightforward, right? We all have online profiles at our universities, and for some of us these say that we are interested in business or management history or related terms. Web-scraping technologies have been around for a long time, so this sounds like a straightforward ask.

Before you freak out, tools continue to perform really badly at this. Claude fabricated people, Gemini and ChatGPT/Copilot each found an odd collection, and both tend to place people at universities where they worked many, many years ago (suggesting they mostly rely on ingested training data). At some point months ago, ChatGPT suggested coding a map from the directory. It was terrible.

This time round, Claude instead came up with a plan:

Use Harzing’s Publish or Perish software

Search Google Scholar profiles

Use keywords

Export the results

This had so many advantages: it only included people who defined themselves within the field and made this information publicly available. Google Scholar limits how much information you can request at one time; the PoP software is designed to work around that, but it has its limits. So don’t expect to get more than a few hundred results (low hundreds). Basically, if you did this for a large field, it would not work very well.

After some trial and error, I could export a list to Excel, but it required a lot of data cleaning, like separating first and surnames, etc. I started doing this manually, but with different types of entries and international naming conventions, it was just mind-numbingly boring.

And then I thought — hang on a minute… didn’t the FT’s AI Shift say AI was great for data cleaning? I gave the job to Claude, who fixed it beautifully. The remaining one or two mistakes were easily fixed.
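To give a flavour of what this kind of name cleaning involves, here is a minimal sketch in Python. It is my own illustration, not the code Claude produced: the `split_name` function and the `PARTICLES` set are hypothetical, and the rules are simple heuristics that assume the surname comes last unless the entry is written as “Surname, Given”.

```python
# Surname particles that usually belong with the family name (illustrative, not complete)
PARTICLES = {"van", "von", "de", "der", "den", "del", "della", "di", "da", "dos", "la", "le"}


def split_name(full_name: str) -> tuple[str, str]:
    """Return (given_names, surname) using simple heuristics."""
    name = " ".join(full_name.split())  # collapse stray whitespace
    if not name:
        return "", ""

    if "," in name:
        # "Surname, Given" style entries
        surname, given = (part.strip() for part in name.split(",", 1))
        return given, surname

    parts = name.split(" ")
    if len(parts) == 1:
        return "", parts[0]

    # Start with the last word as the surname, then pull in preceding particles
    idx = len(parts) - 1
    while idx > 1 and parts[idx - 1].lower() in PARTICLES:
        idx -= 1
    return " ".join(parts[:idx]), " ".join(parts[idx:])


for raw in ["Jane Smith", "Smith, Jane", "Ludwig van Beethoven", "  Maria   de la Cruz "]:
    print(repr(raw), "->", split_name(raw))
```

Heuristics like these break down for conventions where the family name comes first, or for double surnames without a hyphen, which is exactly where the manual spot-checking comes in.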

I then had it merge some of the old searches into the document. I asked Claude to validate and check on individuals — that seemed to be trickier for an AI tool. I did some manual checking, but by no means all.

Then I asked Claude to code the map and tell me how to put it online. It explained how to work it on GitHub, and, like magic, it actually worked. Even though I barely understand how GitHub works.

When I decided to contact a few colleagues to allow me to show their details on the map, it became a little trickier still.

AI tools cannot “see” the websites they create. That explains why they do some things really well, yet struggle with others that you could give to an undergraduate RA to sort out.

Basically, Claude told me repeatedly that the dots now don’t overlap and work well, even though they really didn’t. I had to insist for several rounds, iterating the map to get something more useful. In the process, I learned more about HTML, CSS styling, and how to update code documents on GitHub.
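One way to handle overlapping dots without anyone having to look at the page is to spread markers that share a location in the data itself. The sketch below is a hypothetical illustration in Python, not the code behind my map: `spread_overlapping`, the `radius_deg` default and the sample coordinates are all assumptions.

```python
import math
from collections import defaultdict


def spread_overlapping(points, radius_deg=0.02):
    """Offset markers that share (nearly) the same coordinates onto a small circle.

    points: list of (label, lat, lon) tuples.
    Returns a list of (label, lat, lon) with co-located markers nudged apart.
    """
    groups = defaultdict(list)
    for label, lat, lon in points:
        # Round so near-identical coordinates (e.g. the same campus) count as one spot
        groups[(round(lat, 3), round(lon, 3))].append((label, lat, lon))

    result = []
    for members in groups.values():
        if len(members) == 1:
            result.append(members[0])  # nothing to separate
            continue
        for i, (label, lat, lon) in enumerate(members):
            angle = 2 * math.pi * i / len(members)
            result.append((label,
                           lat + radius_deg * math.sin(angle),
                           lon + radius_deg * math.cos(angle)))
    return result


# Placeholder people and approximate coordinates, for illustration only
people = [("Person A", 51.5, -0.12), ("Person B", 51.5, -0.12), ("Person C", 40.0, -74.0)]
for label, lat, lon in spread_overlapping(people):
    print(f"{label}: {lat:.4f}, {lon:.4f}")
```

The appeal of doing it this way is that the result can be checked with numbers (are any two coordinates still identical?) rather than by eyeballing the map, which is the one thing the AI cannot do.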

What I learned

This was great fun and a bit of a hobby. My next step will be to use proper Claude Code and see if I can automate the updating of the map, now that I am crowdsourcing entries and permissions (including the few people who requested removal from the map — though I strongly suspect they are not on there anyway, but I will need to check).

AI boosters claim that these tools are super powerful. AI doomers may sometimes actually believe that the tools are more powerful than they really are, and end up either paranoid or disappointed. It is clear that there are mechanisms on the web that restrict AI access (robots.txt and other tools, which Claude respects), and that the way AIs can interact with the digital world is not the same as the way humans do.
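If you are curious what a robots.txt restriction looks like in practice, here is a small sketch using the parser from Python’s standard library. The robots.txt content, the bot name `ExampleBot` and the example.org URLs are made up for illustration; real sites publish their own rules, and I do not know how any particular AI tool implements its checks.

```python
from urllib.robotparser import RobotFileParser

# A made-up robots.txt: one named crawler is blocked entirely,
# everyone else is only kept out of /private/
EXAMPLE_ROBOTS_TXT = """\
User-agent: ExampleBot
Disallow: /

User-agent: *
Disallow: /private/
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt rules let user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


for agent in ["ExampleBot", "OtherBot"]:
    for url in ["https://example.org/staff/profile", "https://example.org/private/notes"]:
        print(agent, url, is_allowed(EXAMPLE_ROBOTS_TXT, agent, url))
```

Note that robots.txt is a request rather than a lock: it only works for crawlers that choose to honour it.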

AIs read code, humans see the visualisation that the code creates. So AI solutions tend to be more overwrought than human ones, but that is balanced by processing speed.

Does it save time? Maybe, but all of these examples are things that I would never have done manually. This is really more about expanding what I can do, and figuring out where and how that would be useful.

Hopefully, this was useful to you, and do try out some of this stuff. Beats playing video games for entertainment in my view!