Is AI taking over social science research? Part 1

Both a qualitative and a business & management perspective on the recent big debate over whether AI is going to take all our jobs (yes, academic jobs, too)

If you missed it, you are either insufficiently online, or you spend too much time on slop rather than on the academic internet… I leave this determination up to you.

And in light of how widely (and fiercely) debated this topic became at the start of March, this Friday's post is free to read, because I think this has been quite a lopsided debate.

Lopsided because it has been dominated by the quants, who are (perhaps predictably?) seeing that their methodological skills are more substitutable than they realised.

Also lopsided because the social science debate has been dominated by political scientists and economists. That one had me a little surprised — where are all the other social scientists? Especially business and management?


AI will take all your jobs

The frenzied debate is, of course, easiest to understand as part of the wider argument over whether we humans, and our means of survival (otherwise known as jobs), are at stake. As Richard Elsom, aka the AI Archivist, pointed out on LinkedIn, it is mostly people who do not do our jobs, and know nothing about them, who are busy telling everyone that those jobs will soon be obsolete.

The FT’s AI Shift newsletter regularly digs into claims about AI-induced job-maggedon, and its two authors have, for now, settled on the following:

The economic data suggest that job losses are not due to AI: if AI were replacing workers, you would expect the productivity of the remaining workers to rise. Guess what: that is not happening.

There is one caveat to this: software and apps have been shipping at a noticeably higher rate since 2025. So the Claude Code effect is real. But by now, you have probably heard about the Jevons Paradox: greater efficiency in the use of a resource can lead to greater overall consumption of it. So this may not even spell doom for coders. (Also, Google “demand elasticity” and look smart in the next AI debate.)

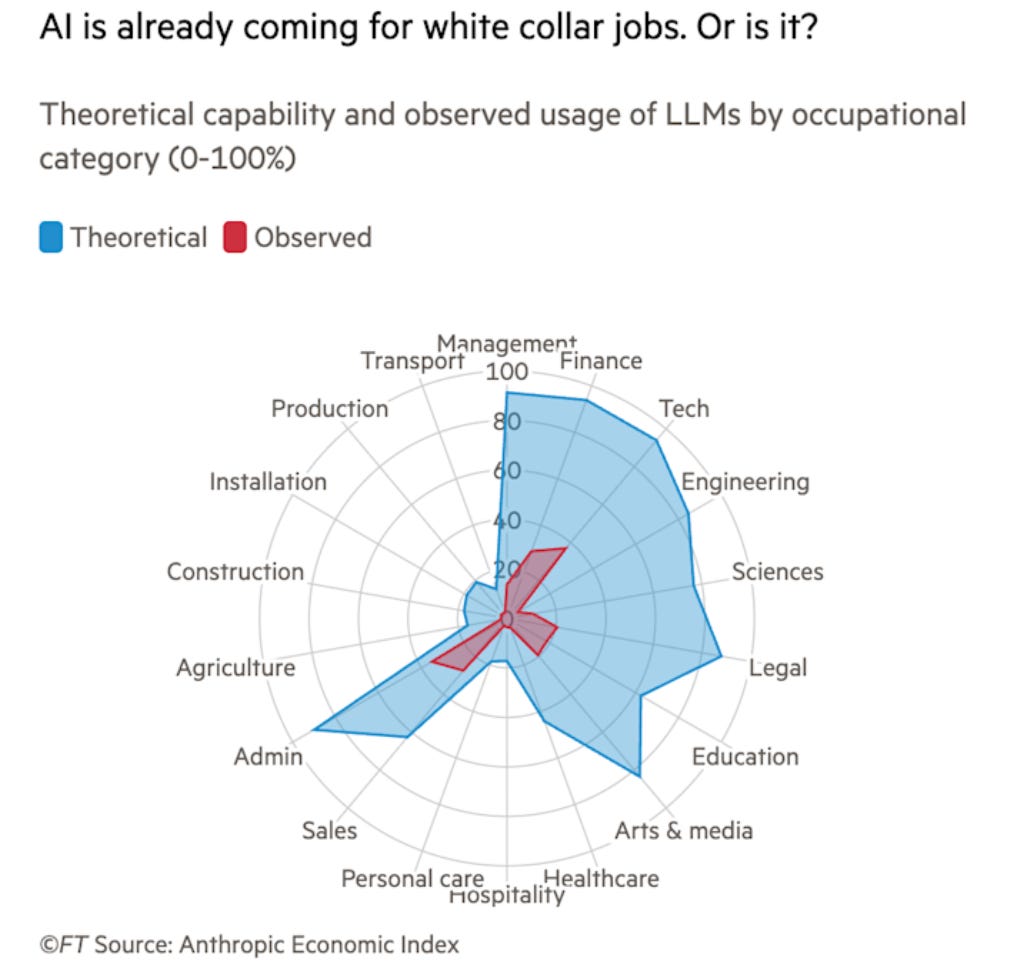

Most papers examining “at-risk” occupations break jobs down into discrete tasks and track whether an AI can perform each one. But, for the most part, our jobs are not bundles of discrete tasks. Sorry, but simple analytics only get you so far. Also, here is a neat spider diagram by the FT that will either totally reassure you or give you the willies, depending on your general inclination anyway:

As a historian, you may note many a tortured analogy with past technological revolutions. Electrification and the introduction of spreadsheet software are popular choices; see “AI as Normal Technology” and a previous post here. But the prize for best recent historical analogy certainly goes to David Oks’ account of bank tellers, ATMs and the iPhone. (Worth a read!)

So, this is a short summary of the general moral panic. But it is so much more entertaining when the argument erupts about your own job.

AI will write your social science papers for you

You may know the post that kicked off the Big Debate. But first I want to plug the FT’s AI Shift newsletter again, which discussed how good AI has become at quantitative social science analysis. Journalist John Burn-Murdoch reflected that, now that AI can do the grunt work, clever people who previously lacked the skills to use R or Python, or the time to clean the data, may be able to run more analyses more quickly. And, of course, John Burn-Murdoch and Sarah O’Connor then discussed the concomitant “AI brain fry”: the exhaustion that sets in when AI solves your problems so quickly that your work intensifies.

But, you know, other people…

And then the Substack post that riled most of Bluesky (apparently, how would I know?) appeared:

Kustov IS a social scientist, so this set the cat among the pigeons. And not just with the title and the graphics, but also with a post hoc disclosure that the first post was written by AI… (based on his social media posts).

It’s a good piece, and it has a Part 2 follow-up. Part 1 made one really outrageous claim:

AI can already do social science research better than most professors.

Elsewhere, probably in his Substack Notes, which I cannot currently find, he hedged that this refers to the average and pointed to some of our favourite high-volume/low-standard “academic” publishers. A bit disingenuous, but the reality is that a few years as an editor makes you somewhat disillusioned with academic standards (by which I mean me, though maybe also Kustov).

In Part 1, Kustov opines that he will no longer plan for a research assistant in his future workflows. (Ah, our US colleagues… research assistants, what are those?) His excitement about agents also shows that he works in a tradition in which workflows can easily be described and outsourced, which has classically been more true of quantitative researchers.

Overall, Part 2 is perhaps the more thought-provoking piece, putting forward a number of points worth discussing:

“AI exposes what’s already broken in academia and beyond” — honestly, hard to argue with, especially around publishing.

“Qualitative research and novel data collection will increase in relative value” — let’s all rejoice!

The “jagged frontier” is real, but so is the role of user skill, and critics tend to criticise outmoded models while remaining thoroughly unaware of how good the frontier models are.

The last point is especially noticeable among the AI doomers in our field, I am afraid to say.

But what left me somewhat disappointed was this: there is plenty of discussion of what is broken in academia, specifically in academic publishing (a subject I can wax angry about at any time), and there are some useful pointers on jaggedness that are real, yet very little of it seems to reflect qualitative researchers’ concerns or the realities of business and management research.

So let’s look at some of these hotly contested issues from the perspective of a “qual” researcher in business and management.

What are the real bottlenecks in business research?

On reflection, most people would say the bottleneck is not producing more papers. Yet many arguments about AI replacing social scientists focus on its ability to produce papers more quickly, including the underlying analysis and the literature review.

Literature review papers are notoriously difficult to publish, by the way, and this will likely get worse now that AI can churn them out.

In business and management, I would argue, you can publish anything you want: there are so many journals, many of them unranked, some questionable and, of course, some predatory. And you can always post anything on SSRN or MPRA, so publishing per se is not a meaningful bottleneck.

But we all know that this is not the game.

The game has always been an institutional one: getting into the right journals (the right journals differ by institution type and country, though we all more or less agree on the elite ones).

This feeds into tenure, probationary review, promotion or, indeed, avoiding redundancy.

So you need a) the cultural knowledge of which journals count, which is increasingly codified (more on that in a future post); and b) the harder-to-obtain cultural knowledge and social capital that define academic communities and what journals (that is, editors and reviewers) want. This kind of academic politics may be distasteful when we consider the pervasive gatekeeping in academia, but let me come back to that in Part 2…

What’s your view on the emerging big AI debate? Leave a comment below.